I’ve been revisiting some of my UI elements recently, tidying up some old bits-and-bobs, testing out a few new ones and looking again at controller input. One issue I keep encountering involves getting meaningful information back and forth from the objects in-scene to the cursor and other elements in screen space…

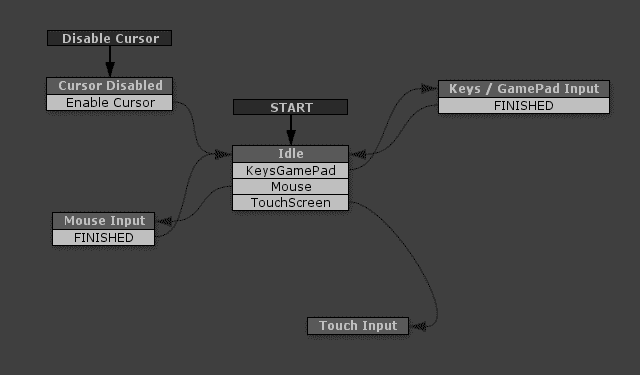

I keep the visible game-cursor separate from the actual mouse-cursor: I track the mouse every frame but only update the cursor to match at specific times. At certain points in the game I want direct control over the cursor’s position regardless of where the mouse is; I might want it restricted to a certain area, or physically affected by a character or the environment. I also want to support various control systems, with the ability to swap between them at any stage. To this end, the various control methods are engaged only at the point of input: an idle state detects key presses, axis events etc., then switches to the relevant input-state accordingly.

This hopefully means that, at some later stage, I could also drop in a plug-in for handling touch input or some yet-to-be-invented custom controller and it shouldn’t affect anything else. It should also make it straightforward to remove or disable certain inputs for specific platforms.
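As a rough, engine-agnostic illustration of that idle-then-dispatch idea, here is a minimal Python sketch. It is not the Playmaker setup itself: the `InputRouter` class, the event names and the state names are all hypothetical, chosen only to show how input methods could be added or removed without touching the rest.

```python
from dataclasses import dataclass, field


@dataclass
class InputRouter:
    """Idle state that hands control to whichever input-state matches an event.

    All event and state names here are illustrative assumptions.
    """
    states: dict = field(default_factory=lambda: {
        "mouse_move": "mouse",
        "key_press": "keyboard",
        "axis": "gamepad",
    })
    current: str = "idle"

    def handle(self, event_kind: str) -> str:
        # Switch to the state registered for this event kind;
        # unknown or disabled events leave us in the idle state.
        self.current = self.states.get(event_kind, "idle")
        return self.current

    def register(self, event_kind: str, state: str) -> None:
        # Drop in a new control method (e.g. touch) without editing the others.
        self.states[event_kind] = state

    def disable(self, event_kind: str) -> None:
        # Remove an input for platforms that do not support it.
        self.states.pop(event_kind, None)


router = InputRouter()
router.handle("axis")              # gamepad takes over
router.register("touch", "touch")  # later plug-in
router.disable("mouse_move")       # e.g. on a console build
```

Because each control method is only a table entry, swapping or disabling one is a one-line change.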

The drawback with this approach is you occasionally have to recreate some basic mouse-cursor functionality instead of using OnMouse events, and this is where you sometimes encounter the issues of jumping between the various “spaces”.

Unity has a bunch of different ways of contextualizing positions:

- world space – in relation to the world’s origin at 0,0,0

- self space – assumes an object’s parent as 0,0,0

- screen space – in pixels, taking bottom left as 0,0

- viewport space – normalized screen space, 0,0 to 1,1

There are more but, to be honest, I find these confusing enough. Essentially, your mouse exists in screen space, a flattened-out plane the same size in pixels as the screen resolution; viewport space is a normalized version of screen space. In Unity you can convert from screen to world using Camera.ScreenToWorldPoint with a depth offset: this takes a point on the screen’s plane, moves into the scene a little bit and then returns the position that would be in world space. Camera.WorldToScreenPoint does the reverse.
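To make the relationship between these spaces a bit more concrete, here is a small Python sketch of the underlying maths. It is an approximation, not Unity’s implementation: it assumes a simple perspective camera sitting at the origin and looking down +z, with a 60 degree vertical field of view, and the function names merely echo Unity’s Camera methods.

```python
import math


def screen_to_viewport(sx, sy, width, height):
    # Viewport space is screen space normalized to the 0..1 range.
    return sx / width, sy / height


def viewport_to_screen(u, v, width, height):
    return u * width, v * height


def world_to_screen(point, width, height, fov_y_deg=60.0):
    """Project a point (x, y, z) in front of the camera to (sx, sy, depth).

    Assumes the camera is at the origin looking down +z; depth is the
    distance along that axis, so z must be positive.
    """
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2)
    aspect = width / height
    ndc_x = (f / aspect) * x / z   # -1..1 across the screen
    ndc_y = f * y / z              # -1..1 up the screen
    return (ndc_x + 1) / 2 * width, (ndc_y + 1) / 2 * height, z


def screen_to_world(sx, sy, depth, width, height, fov_y_deg=60.0):
    """Inverse of world_to_screen: push a screen point `depth` units into the scene."""
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2)
    aspect = width / height
    ndc_x = sx / width * 2 - 1
    ndc_y = sy / height * 2 - 1
    return ndc_x * depth * aspect / f, ndc_y * depth / f, depth
```

The depth value is the “moves into the scene a little bit” part: a 2D screen position only becomes a single world position once you say how far from the camera it should sit.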

So, as an example, I use 3D objects for my UI: when you move the mouse over an object, a pop-up appears with options to click on. As they’re attached to scene-objects they exist in world space, but I’d rather they were positioned more consistently and in the same context as the cursor.

The steps I go through to get that to work are:

- Raycast from the screen position of the cursor

- Get the world position of the hit object

- Convert that back to a screen position at an offset

- Convert that offset screen position back to world space and position the UI element there
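The steps above can be sketched outside of Unity as follows. This is a simplified stand-in, not the actual Playmaker action: it assumes a camera at the origin looking down +z, treats scene objects as spheres, and the names `raycast` and `place_popup`, plus the pixel offset and UI depth values, are hypothetical.

```python
import math

WIDTH, HEIGHT, FOV_Y = 1920, 1080, 60.0   # assumed screen size and field of view
F = 1.0 / math.tan(math.radians(FOV_Y) / 2)
ASPECT = WIDTH / HEIGHT


def world_to_screen(p):
    # Perspective projection; returns (sx, sy, depth). Requires z > 0.
    x, y, z = p
    return ((F / ASPECT * x / z + 1) / 2 * WIDTH,
            (F * y / z + 1) / 2 * HEIGHT,
            z)


def screen_to_world(sx, sy, depth):
    # Inverse projection: place a screen point `depth` units in front of the camera.
    nx = sx / WIDTH * 2 - 1
    ny = sy / HEIGHT * 2 - 1
    return (nx * depth * ASPECT / F, ny * depth / F, depth)


def raycast(sx, sy, spheres):
    """Return the centre of the first sphere along a ray through the screen point, or None.

    `spheres` is a list of (centre, radius) pairs; candidates are ordered by
    the distance along the ray to their closest approach.
    """
    d = screen_to_world(sx, sy, 1.0)               # ray direction from the camera
    length = math.sqrt(sum(c * c for c in d))
    d = tuple(c / length for c in d)
    best = None
    for centre, radius in spheres:
        t = sum(a * b for a, b in zip(centre, d))  # distance along the ray
        if t <= 0:
            continue                               # behind the camera
        miss_sq = sum(c * c for c in centre) - t * t
        if miss_sq <= radius * radius and (best is None or t < best[0]):
            best = (t, centre)
    return None if best is None else best[1]


def place_popup(cursor, spheres, offset=(40, 40), ui_depth=2.0):
    hit = raycast(cursor[0], cursor[1], spheres)   # steps 1 and 2
    if hit is None:
        return None
    sx, sy, _ = world_to_screen(hit)               # step 3: back to screen space
    # Step 4: apply the pixel offset, then return to world space at a fixed depth.
    return screen_to_world(sx + offset[0], sy + offset[1], ui_depth)
```

Because the offset is applied in pixels and the depth is fixed, the pop-up keeps the same on-screen relationship to the object however far away the object is.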

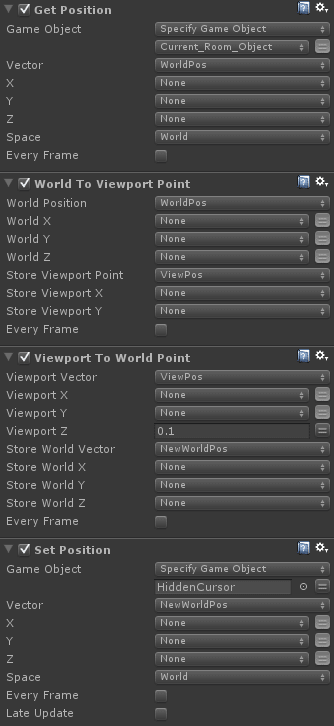

I was initially using a series of actions in Playmaker to do this:

…but, as I’ve recently started dabbling in custom actions, I’ve now wrapped the whole process up into just one. To confuse matters a little, I used viewport space here instead of screen space and I honestly can’t remember why now! I don’t think it matters in this context though, as long as it’s internally consistent; the important thing is to “flatten” your transforms to the space you want your UI in.

You can grab this action on the Playmaker forum here: http://hutonggames.com/playmakerforum/index.php?topic=11358.0, via Playmaker’s in-editor search, or on Snipt.

When I was setting up a quality settings menu a while back, I placed all the buttons under the same parent as my visible cursor, which made it easy to set the cursor transforms to match when skipping through them. This was fine for flat buttons, but I had an idea to replicate this kind of interface for scene objects:

I thought this might be a good way of speeding up certain interactions: as the player explores an environment and discovers certain hotspots, they are added to an array, and tapping a key or a shoulder button skips the cursor through them, starting with the nearest in screen space.

Using the above steps it was relatively straightforward to get the objects working very similarly to the buttons: cycle through the array of objects, get the world position, convert to screen space with an offset to match the cursor, then place the cursor at the corresponding world position.

To find which object was nearest to the cursor, I used a slightly altered Array List Get Closest Game Object action, replacing the references to position with World Point To Screen Point equivalents. I’ll probably go back and replace the settings menu setup now; this way is much more accommodating if you’re moving stuff around!
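For anyone curious what “closest in screen space” amounts to, here is a small Python sketch. It is not the altered Playmaker action, just the idea behind it: it assumes a camera at the origin looking down +z, hotspots that are all in front of the camera, and the helper names `nearest_index` and `cycle_from_nearest` are hypothetical. Cycling through the array in its stored order from the nearest entry is also my assumption about how the skipping could work.

```python
import math


def world_to_screen(p, width=1920, height=1080, fov_y_deg=60.0):
    # Simple perspective projection to pixel coordinates; requires z > 0.
    x, y, z = p
    f = 1.0 / math.tan(math.radians(fov_y_deg) / 2)
    aspect = width / height
    return ((f / aspect * x / z + 1) / 2 * width,
            (f * y / z + 1) / 2 * height)


def nearest_index(cursor, hotspots):
    """Index of the hotspot whose projected position is closest to the cursor in pixels."""
    def pixel_distance(p):
        sx, sy = world_to_screen(p)
        return math.hypot(sx - cursor[0], sy - cursor[1])
    return min(range(len(hotspots)), key=lambda i: pixel_distance(hotspots[i]))


def cycle_from_nearest(cursor, hotspots):
    # Rotate the list so that skipping starts at the hotspot nearest the cursor.
    start = nearest_index(cursor, hotspots)
    return hotspots[start:] + hotspots[:start]


hotspots = [(-2.0, 0.0, 5.0), (0.1, 0.0, 5.0), (2.0, 1.0, 8.0)]
order = cycle_from_nearest((960, 540), hotspots)
```

Measuring in pixels rather than world units matters here: an object far off in the distance can still be the one sitting right under the cursor.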

As ever, comments, questions, crits and corrections are all more than welcome…